
As they won’t be crawled, they won’t be included within the ‘internal’ tab or the XML Sitemap. If there are sections of the website or URL paths that you don’t want to include in the XML Sitemap, you can simply exclude them in the configuration pre-crawl.There’s a few ways to make sure they are not included within the XML Sitemap – You shouldn’t include URLs with session ID’s (you can use the URL rewriting feature to strip these during a crawl), there might be some URLs with lots of parameters that are not needed, or just sections of a website which are unnecessary. If a page can be reached by two different URLs, for example and (and they both resolve with a ‘200’ response), then only a single preferred canonical version should be included in the sitemap. Outside of the above configuration options, there might be additional ‘internal’ HTML 200 response pages that you simply don’t want to include within the XML Sitemap.įor example, you shouldn’t include ‘duplicate’ pages within a sitemap. You can see which URLs are ‘noindex’, ‘canonicalised’ or have a rel=“prev” link element on them under the ‘Directives’ tab and using the filters as well. You can see which URLs have no response, are blocked, or redirect or error under the ‘Responses’ tab and using the respective filters. This can all be adjusted within the XML Sitemap ‘pages’ configuration, so simply select your preference. Pages which are blocked by robots.txt, set as ‘noindex’, have been ‘canonicalised’ (the canonical URL is different to the URL of the page), paginated (URLs with a rel=“prev”) or PDFs are also not included as standard.

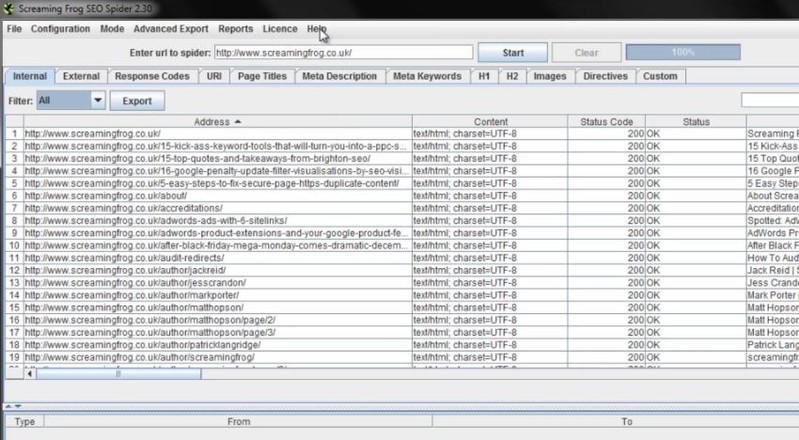

However, you can select to include them optionally, as in some scenarios you may require them. So you don’t need to worry about redirects (3XX), client side errors (4XX Errors, like broken links) or server errors (5XX) being included in the sitemap. Only HTML pages included in the ‘internal’ tab with a ‘200’ OK response from the crawl will be included in the XML sitemap as default. This will open up a number of sitemap configuration options. When the crawl has reached 100% and finished, click the ‘XML Sitemap’ option under ‘Sitemaps’ in the top level menu. Open up the SEO Spider, type or copy in the website you wish to crawl in the ‘enter url to spider’ box and hit ‘Start’. The next steps to creating a XML Sitemap are as follows – If you’d like to crawl more than 500 URLs, you can buy an annual licence, which removes the crawl limit and opens up the configuration options. You can download via the buttons in the right hand side bar. To get started, you’ll need to download the SEO spider which is free in lite form, for up to 500 URLs. This tutorial walks you through how you can use the Screaming Frog SEO Spider to generate XML Sitemaps. Screaming Frog SEO Spider is a software application that was developed with Java, in order to provide users with a simple means of gathering SEO information about any given site, as well as generate multiple reports and export the information to the HDD.How To Create An XML Sitemap Using The SEO Spider The interface you come across might seem a bit cluttered, as it consists of a menu bar and multiple tabbed panes which display various information. However, a comprehensive User Guide and some FAQs are available on the developer’s website, which is going to make sure that both power and novice users can easily find their way around it, without encountering any kind of issues. 
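To make the tutorial’s inclusion rules concrete, here is a minimal Python sketch of the same filtering logic: keep only HTML pages with a ‘200’ response, skip anything noindexed, blocked, canonicalised or paginated, strip session IDs and drop excluded paths. This is not the SEO Spider’s own code or export format. The `Page` fields, the function names and the session parameter names are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Iterable, List, Optional, Sequence
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical session parameter names; adjust for the site being crawled.
SESSION_PARAMS = {"sid", "sessionid", "phpsessid", "jsessionid"}


@dataclass
class Page:
    """One crawled URL. Field names are illustrative, not a real export schema."""
    url: str
    status: int
    content_type: str = "text/html"
    noindex: bool = False
    blocked_by_robots: bool = False
    has_rel_prev: bool = False
    canonical: Optional[str] = None  # canonical URL declared by the page, if any


def strip_session_params(url: str) -> str:
    """Return the URL with any session ID query parameters removed."""
    parts = urlsplit(url)
    kept = [(key, value)
            for key, value in parse_qsl(parts.query, keep_blank_values=True)
            if key.lower() not in SESSION_PARAMS]
    return urlunsplit(parts._replace(query=urlencode(kept)))


def include_in_sitemap(page: Page) -> bool:
    """Apply the default rules: HTML, 200 response, indexable, self-canonical."""
    if page.status != 200:
        return False  # redirects (3XX) and errors (4XX, 5XX) are left out
    if not page.content_type.startswith("text/html"):
        return False  # PDFs and other non-HTML content are left out
    if page.blocked_by_robots or page.noindex or page.has_rel_prev:
        return False
    if page.canonical is not None and page.canonical != page.url:
        return False  # canonicalised to a different URL
    return True


def select_sitemap_urls(pages: Iterable[Page],
                        excluded_paths: Sequence[str] = ()) -> List[str]:
    """Return de-duplicated sitemap URLs in crawl order."""
    seen = set()
    selected = []
    for page in pages:
        if not include_in_sitemap(page):
            continue
        url = strip_session_params(page.url)
        path = urlsplit(url).path
        if any(path.startswith(prefix) for prefix in excluded_paths):
            continue  # section excluded up front
        if url not in seen:
            seen.add(url)
            selected.append(url)
    return selected
```

Calling `select_sitemap_urls(pages, excluded_paths=("/cart/",))` on a list of `Page` records would leave only the indexable HTML pages outside `/cart/`, each listed once with its session parameters removed.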
View internal and external links, filter and export them

It is possible to analyze a specified URL and view lists of internal and external links in separate tabs. The former comes with details such as address, type of content, status code, title, meta description, keywords, size, word count, level, hash and external out links, while the latter only shows address, content, status, level and inlinks.

Both lists can be filtered by HTML, JavaScript, CSS, images, PDF, Flash or other content types, and exported to CSV, XLS or XLSX format.

View further details and graphs, and generate reports

In addition to that, you can check the response time of multiple links and view page titles along with their occurrences, length and pixel width. It is possible to view long lists of meta keywords and their length, headers and images. Graphical representations are also available in the main window, along with a folder structure of all SEO elements analyzed, as well as stats on the depth of the website and average response time. It is possible to use a proxy server, create a sitemap and save it to the hard drive as an XML file, and generate multiple reports on the crawl overview, redirect chains and canonical errors.
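For readers who want to see what a sitemap file saved as XML looks like underneath, the sitemaps.org protocol uses a `urlset` root element with one `url` / `loc` entry per page. The following is a minimal Python sketch that writes such a file by hand, assuming the list of URLs has already been chosen. The function names are illustrative and are not part of the SEO Spider.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls):
    """Build an ElementTree with one <url><loc> entry per URL."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        # ElementTree escapes characters such as '&' when serialising.
        ET.SubElement(entry, "loc").text = url
    return ET.ElementTree(urlset)


def write_sitemap(urls, path):
    """Write the sitemap to disk as UTF-8 with an XML declaration."""
    build_sitemap(urls).write(path, encoding="utf-8", xml_declaration=True)
```

For example, `write_sitemap(["https://example.com/"], "sitemap.xml")` would save a one-entry sitemap in the current directory. Optional protocol fields such as `lastmod` are left out to keep the sketch short.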

To conclude, Screaming Frog SEO Spider is an efficient piece of software for those who are interested in analyzing their website from an SEO standpoint.
